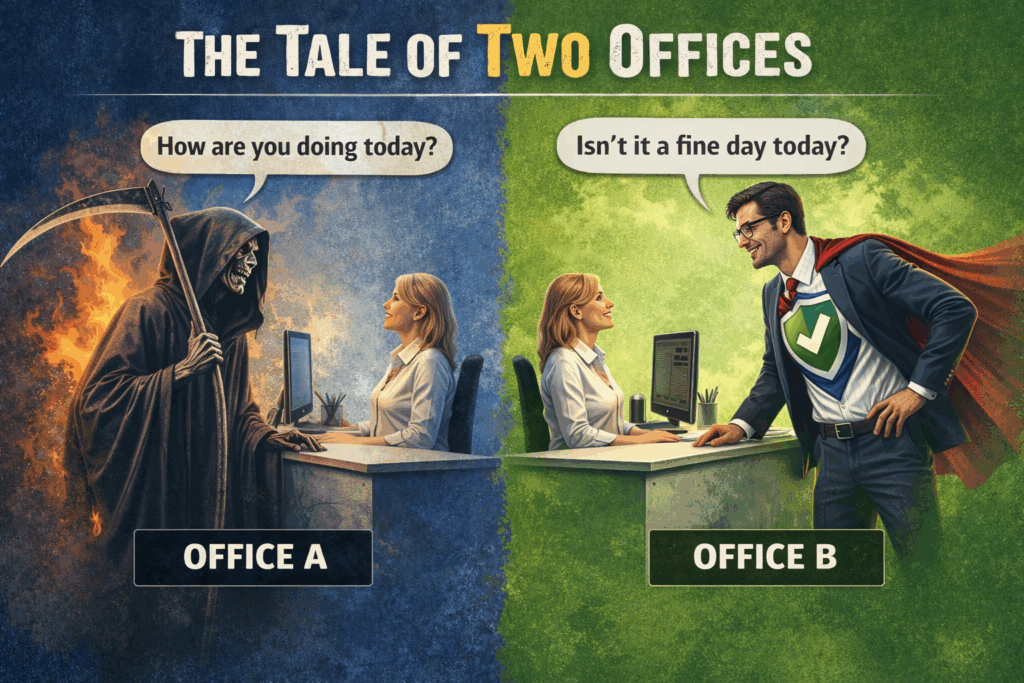

I’m going to keep this completely anonymized. No names, to protect the innocent; just Office A and Office B.

This is a situation where I had to make a painful budget decision for my business, and if you own a small business, I hope it can help you in yours.

The point isn’t who they are. It’s all about mindset: how one mindset books a disaster for a future date, while the other works to make sure that day never comes.

The Two Offices:

Office A Lives by Subscription Disaster Recovery:

Office A, my customer, had new owners: a management company. That management company refused to refresh Office A’s hardware, for two reasons. Reason number one: they said Office A wasn’t making enough money to warrant an upgrade.

That was a real “what?” moment. While the management company’s IT manager was telling me this, I was thinking: if this workstation goes down, it will cost you $x for every day it’s down, at a company you already consider not lucrative enough to upgrade. If that happens, you’re even further in the hole. In what business universe does that make sense?

I politely made that point to the manager, a young guy, and told him he should look for a different job, or come work for me. I found out a few weeks later that he had left.

The second reason, I believe, is that they couldn’t: they didn’t have the technical expertise. I base that belief on the condition of the network. My company, Arnold Consulting, also does high-level network infrastructure configuration and network design.

We built and configured that network, and it ran flawlessly through 15 years’ worth of upgrades without ever going down, including upgrading domain controllers on the fly while people were working on the network.

After a while, the domain became so degraded that backups could no longer find their network paths, and the on-site BDR (Backup Disaster Recovery) server began to fail. It’s either a lack of manpower for maintenance or a lack of know-how. I lean toward lack of know-how, because of the many changes that introduced the instability and broke basic network activity.

The Disaster Recovery Paradox for Office A:

The underlying belief is that disaster recovery can be bought like a subscription plan. In their world, you pay monthly, you keep old systems running longer than they should, and if something goes wrong, you call the provider and it gets fixed.

The thinking sounds good on the surface (“we’ve got you”), but it’s a dangerous belief, because disaster recovery isn’t a force field, and it isn’t magic. It’s what you reach for after something happens, not something that makes a fragile environment safe to run indefinitely. Disaster recovery is about systems, yes, but it’s also a philosophy and a set of understandings.

The Philosophy of Disaster Recovery – No be There:

Backups exist for a reason. When primary systems fail, whether from hardware breakdown, corruption, human error, or a security event, you need a way to get your stuff, your expensive information stuff, back.

Disaster recovery is a safety net, not permission to run your systems into the ground and let them fall into a black hole and spaghettify into oblivion.

As systems get very old, recovery also becomes a supply-chain problem: the exact hardware you’d need to restore or rebuild may no longer be available, and even “spares” you bought ahead of time can be exhausted. That’s why the goal is always to keep the primary environment healthy and supported.

You want to run on primary systems, because primary is where the steady state is. When you’re living on recovery, you’re already in a problem.

It’s also important to understand that backup systems can fail even when they’re monitored and periodically tested. Software is made by deeply flawed people, and it depends on things we design and maintain: storage, credentials, and changing environments.

Operating system updates, vendor changes, security hardening, storage degradation, repository capacity issues, configuration changes, and even malicious activity can introduce failure modes that didn’t exist at the last test.

Disaster Recovery Is as Much a Practice as It Is a Philosophy:

Most people hear “disaster recovery” and think “backup.” That’s like thinking the seatbelt is the car’s whole safety plan. Backups matter, and yes, restores matter, but they’re the last line of effort.

Disaster recovery is a philosophy: don’t create the conditions where your business has to limp along on emergency life support. Backup is your fallback, not the operating model. If your plan is basically “we’ll call IT when it breaks,” then:

“That’s not resilience; that’s just scheduling the next outage without checking with HR first.”

It’s about training people not to click the “urgent invoice” from a sender they’ve never heard of, and designing the network so one stupid moment doesn’t turn into a serious network-down event.

In twenty years, I’ve restored failed systems, dead drives, and the occasional “Did I just delete that whole directory?”

The “philosophy” is doing the things you know, as an MSP, you should be doing. It’s all about the mundane:

- Patch Management

- Endpoint Protection

- A Good Professional Perimeter Firewall

- Appropriate Admin Permissions

- Internal Habits That Don’t Invite Malware

- Timely Hardware Refresh

The offices that avoid true disasters aren’t lucky. They follow the precepts of disaster recovery the way it’s meant to work, and I run their networks accordingly. Have there been incidents? Yep! Hardware dies, and sometimes a same-day replacement isn’t possible.

In the 20 years I’ve been doing this, I haven’t seen one of my clients taken out by a preventable breach or a full-blown, multi-day meltdown, because this philosophy works when it’s applied correctly.

My customers treat prevention like a religion, because ignoring basic hygiene isn’t a business model. The businesses I manage understand this has to be part of daily operations, because when payroll is due and the server dies, the machine doesn’t care that it was “a bad time.”

“The system didn’t get the memo, and the bill always comes due… with penalties and pain.”

Office A already has a date booked with the reaper.

Office B Lives by Disaster Prevention (Downtime Costs Money = Bad):

Office B:

Office B also had aging systems, but its mindset was the exact opposite. Office B understood that old systems eventually stop being stable and start being fragile, so they upgraded and refreshed all their servers and workstations.

Office B also understood a truth that too many businesses avoid. If your business runs on systems and those systems go down, you don’t make money. Not less money, no money at all. When core systems fail, operations stall, staff sits idle, customer confidence erodes, and a small “computer problem” becomes a big business issue.

Does that mean Office B never has problems? No. Hardware fails, people make mistakes, and software still has its days. However, they’ve done everything reasonable to avoid a truly preventable disaster, and “preventable” is the operative word.

Backups Do Fail, and Here’s Why:

One of the hardest things for business owners to understand is that backups aren’t binary. People assume you either “have backups” or you don’t, and that if you do, you’re safe.

In the real world, backups are a system, and systems can fail. That doesn’t mean backups are pointless; it means backups are one layer in the disaster recovery protocol.

A backup isn’t real just because a job ran and a report said “success.” A backup is real when you can restore it, and there are real, legitimate reasons why backups fail, even when an MSP is doing things correctly.

Sometimes, a backup job can technically succeed while the resulting backup is unusable. Tools may report success because data was written somewhere or a snapshot completed, but that doesn’t automatically prove recoverability.

Sometimes the data wasn’t captured in an application-consistent state, a database wasn’t properly handled, the backup chain is incomplete, or the restore process fails when you actually try to bring a system back to life.
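To make “a backup is real when you can restore it” concrete, here is a minimal sketch of one common verification step: after a test restore, compare SHA-256 hashes of the original files against the restored copies. This is a hypothetical illustration in Python, not the tooling I use with clients; the function names `sha256_file`, `hash_tree`, and `verify_restore` are assumptions. A file-level hash match only proves the bytes came back intact; it does not prove a database was captured in an application-consistent state, which needs its own checks.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files don't have to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def hash_tree(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 hex digest."""
    return {
        path.relative_to(root).as_posix(): sha256_file(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def verify_restore(source: Path, restored: Path) -> dict[str, list[str]]:
    """Compare a source tree with a test-restored copy of it (hypothetical helper).

    Returns files missing from the restore, files whose contents differ,
    and unexpected extra files. All three lists empty means the restored
    files match the source byte for byte.
    """
    expected = hash_tree(source)
    actual = hash_tree(restored)
    return {
        "missing": sorted(set(expected) - set(actual)),
        "mismatched": sorted(
            name
            for name in expected.keys() & actual.keys()
            if expected[name] != actual[name]
        ),
        "unexpected": sorted(set(actual) - set(expected)),
    }
```

A call would look like `verify_restore(Path("/data/snapshot"), Path("/mnt/test-restore"))`, with both paths hypothetical. Any non-empty list means the backup shouldn’t be trusted until someone looks at it. Comparing against live data that has changed since the backup ran will report mismatches that aren’t real failures, so in practice you’d compare against the state captured at backup time.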

Even when the network feels stable, operating systems update components, applications change their data structures, databases are constantly in motion, security tools evolve, and background services behave differently after patches.

Those changes can create edge cases: locked files, interrupted snapshots, authentication issues, or backup agents that suddenly can’t do what they did last month.

Vendor updates can also cause issues. Microsoft can modify core services, drivers, or security requirements. Backup software vendors can release improvements that introduce side effects in certain environments. Most updates are good, but occasionally a small change creates a failure mode that didn’t exist the week before.

Testing Backups and Use Cases:

Even with good discipline, there’s always a window between verification tests. I do test restores, and I do verify, but very few people ever catch problems in real time.

Changes happen between test cycles. A configuration change, a policy update, a permissions shift, a cleanup of storage, or an unexpected software change can break a chain that was working yesterday.
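The window between tests can’t be closed, but it can be made visible. Below is a minimal sketch, assuming you record the date of each backup set’s last successful test restore somewhere. The `last_verified` mapping, the 30-day policy window, and the function name `overdue_for_restore_test` are all hypothetical, not features of any specific backup product. It simply flags any backup set whose last verified restore is older than the window.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def overdue_for_restore_test(
    last_verified: dict[str, datetime | None],
    now: datetime,
    window: timedelta = timedelta(days=30),  # hypothetical policy window
) -> list[str]:
    """Return backup sets whose last successful test restore is too old.

    A value of None means the set has never had a verified restore,
    which is treated as overdue.
    """
    overdue = []
    for name, verified_at in sorted(last_verified.items()):
        if verified_at is None or now - verified_at > window:
            overdue.append(name)
    return overdue
```

Running a check like this on a schedule and alerting on a non-empty result is one way to keep the test cycle honest; it narrows the gap between tests without pretending the gap isn’t there.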

That’s not an excuse; it’s reality. Professional IT is not pretending risk is zero. Professional IT is reducing risk and building layers so one failure doesn’t become a business-ending event.

Primary systems are where your stability is, and the entire goal is to keep the business operating there. Backups are absolutely your fallback position, but they’re a safety net: something you test, verify, and understand can also fail, for real reasons.

That’s why primary health matters so much, and why real disaster recovery is a layered, systematic approach. Recovery is the last line, not the first plan.

Conclusion:

This is the intellectual split between the two offices:

• Office A uses disaster recovery as an excuse to keep running antiquated systems indefinitely. At some point, the plan starts to look like an abacus with Ethernet.

• Office B treats prevention like the full practice it is: supported systems, disciplined patching, security controls, and backups that are verified, not just “it ran.” So when something breaks, it’s a contained incident, not a sci-fi chain reaction.

I Let the Customer Go, and $14,000.00 Went Out of the MSP Budget:

So I drew a boundary, something every MSP eventually has to learn to do. I will own outcomes when I can reasonably control the variables, and hardware refresh is one of them.

If an office refuses lifecycle upgrades, patching, and basic hygiene, and still wants the comfort of paying for support, what it’s really buying is a relationship where the IT provider becomes the one at fault later.

I’m not interested in that business model, and that’s why I walked away from $14,000 per year. Not because the work wasn’t valuable, and not because the relationship was hostile. The mindset is unsustainable, and eventually, it would have become a disaster.

“Disaster recovery is not just about backups. Backups are the last layer, not the first plan.”

Disaster recovery is a stack of decisions you make before anything breaks, plus realistic expectations for what recovery and fault tolerance actually look like.

A good provider doesn’t promise backups can never fail. A good provider builds defense in depth so that a failure doesn’t become catastrophic, and then pushes hard for the one strategy that beats everything else: prevention. Don’t live in a disaster scenario. No be there.

Office A bought disaster recovery as a subscription plan and treated it like insurance that replaced responsibility. Office B didn’t want disaster, so they refreshed before failure made the decision for them.

If you’re running a business and your entire plan is “we have an IT guy,” you don’t have a plan; you have hope. Hope is not a strategy. The best disaster is the one you never have, and that’s why I walked away.

Rick Arnold – Arnold Consulting

Side Project – Vintage Antique Crystal and Pressed Glass:

Bio-Note:

When I’m not running Arnold Consulting, I also have an Etsy shop called Vintage Uranium Vault, where I document antique crystal and glass and re-home pieces to buyers who appreciate a craft that is not coming back, especially the increasingly rare art of making and cutting leaded crystal. If you’re hunting for a unique gift, or a piece for your office that looks sharp and starts conversations, you can browse the shop here: https://www.etsy.com/shop/VintageUraniumVault?ref=lp_mys_mfts Thanks for taking a look!
